There is a popular adage about academic writing: “Writing is thinking.” The idea goes like this. There is a simple view of writing as a purely expressive process: the ideas already exist in your mind, and you are just putting them on a page. That may in fact be true some of the time, like for day-to-day emails or texting friends or whatever. But “writing is thinking” reminds us that for most scholarly work, the process of writing is a process of continued deep engagement with the world of ideas. Sometimes before you start writing you think you have it all worked out, in your head or maybe in a proposal or outline you wrote. But when you sit down to write the actual thing, you just cannot make it make sense without doing more intellectual heavy lifting. It’s not because you forgot, and it’s not because you “just” can’t find the right words. It’s because you’ve got more thinking to do, and nothing other than sitting down and trying to write is going to show that to you.*

Something that is every bit as true, but less discussed and appreciated, is that in the quantitative sciences, the same applies to working with data. Data analysis is thinking. The ideas you had in your head, or the general strategy you wrote into your grant application or IRB protocol, are not enough. If that is all you have done so far, you almost always still have more thinking to do.

This point is illustrated especially well by the recent Many Analysts, One Data Set paper. Twenty-nine teams of data analysts were given the same scientific hypothesis to test and the same dataset to test it in. Yet no two teams ran the same analysis, and the teams arrived at 29 different answers. This variability was neither statistical noise nor human error. Rather, the differences in results arose from different reasoned decisions by experienced data analysts. As the authors write in the introduction:

> In the scientific process, creativity is mostly associated with the generation of testable hypotheses and the development of suitable research designs. Data analysis, on the other hand, is sometimes seen as the mechanical, unimaginative process of revealing results from a research study. Despite methodologists’ remonstrations…, it is easy to overlook the fact that results may depend on the chosen analytic strategy, which itself is imbued with theory, assumptions, and choice points.

The very end of the quote drives home a second, crucial point. Data analysis is thinking, but it is something else too. Data analysis is theorizing. And it is theorizing no matter how much or how little the analyst is thinking about it.

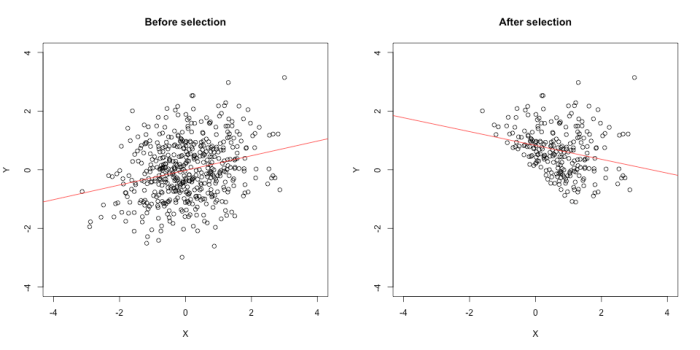

Scientific theory is not your mental state. Scientific theory consists of statements about nature. When, say, you decide on a scheme for how to exclude outliers in a response-time task, that decision implies a theory of which observations result from processes that are irrelevant to what you are studying and therefore ignorable. When you decide on how to transform variables for a regression, that decision implies a theory of the functional form of the relationships between measured variables. These theories may be longstanding ones, well-developed and deeply studied in the literature. Or they may be ad hoc, one-off implications. Moreover, the content of the analyst’s thinking may be framed in theoretical terms (“hmmm let me think through what’s generating this distribution”), or it may be shallow and rote (“this is how my old advisor said to trim response times”). But the analyst is still making decisions** that imply something about something in nature – the decisions are “imbued with theory.” That’s why scientists can invoke substantive reasons to critique each other’s analyses without probing each other’s mental states. “That exclusion threshold is too low, it excludes valid trials” is an admissible argument, and you don’t have to posit what was in the analyst’s head when you make it.
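To make the response-time example concrete, here is a minimal Python sketch. The function name, thresholds, and response times are all hypothetical, made up for illustration rather than taken from any real study or field standard. The point is that each parameter of an exclusion rule is a claim about nature, and changing one changes the estimate.

```python
from statistics import mean

def exclude_outliers(rts_ms, floor_ms=200, ceiling_ms=2000):
    """Keep response times inside [floor_ms, ceiling_ms].

    Hypothetical rule. Each threshold is a claim about nature:
    - floor_ms: faster responses are assumed to be anticipations,
      not products of the process under study.
    - ceiling_ms: slower responses are assumed to be attentional lapses,
      not products of the process under study.
    """
    return [rt for rt in rts_ms if floor_ms <= rt <= ceiling_ms]

# Made-up response times for one participant, in milliseconds.
rts = [150, 420, 480, 510, 560, 610, 690, 1250, 2400]

lenient = exclude_outliers(rts)                  # drops 150 and 2400
strict = exclude_outliers(rts, ceiling_ms=1000)  # also drops 1250

# The strict rule lowers this participant's mean by roughly 100 ms.
print(len(lenient), round(mean(lenient), 1))
print(len(strict), round(mean(strict), 1))
```

Neither rule is the “correct” one in the abstract. Choosing between them requires an argument about what produces a 1,250 ms response in this particular task, which is exactly the kind of substantive critique that doesn’t require knowing what was in the analyst’s head.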

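So data analysis decisions imply statements in theory-space, and to think well about them we probably need to think in that space too. To test one theory of interest, the process of data analysis will unavoidably invoke other theories. This idea is not, in fact, new. It is a longstanding and well-accepted principle in philosophy of science called the Duhem-Quine thesis: a hypothesis can never be tested in isolation, because any test also depends on auxiliary assumptions (about instruments, measures, background conditions, and so on), so a failed prediction can always be blamed on an auxiliary rather than on the hypothesis itself. We just need to recognize that data analysis is part of that web of theories.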

This gives us an expanded framework for understanding phenomena like p-hacking. The philosopher Imre Lakatos said that if you make a habit of blaming the auxiliaries when your results don’t support your main theory, you are in what he called a degenerating research programme. As you might guess from the name, Imre wasn’t a fan. When we p-hack, trying different analysis specifications until we get one we like, we are trying and discarding different configurations of auxiliary theories until we find one that lets us draw a preferred conclusion. We are doing degenerating science, maybe without even realizing it.
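The p-hacking point can also be sketched as a small simulation. This is a hypothetical illustration, not a reanalysis of any real data: both groups are drawn from the same skewed, response-time-like distribution, so the null hypothesis is true by construction. Each simulated study is analyzed under five made-up specifications (raw scores, a log transform, and a few SD-based trimming rules), and a large-sample z-test stands in for the t-tests an analyst would more likely run. We then compare how often one specification fixed in advance comes out “significant” with how often at least one of the five does.

```python
import math
import random
from statistics import NormalDist

def mean(xs):
    return math.fsum(xs) / len(xs)

def sample_var(xs):
    m = mean(xs)
    return math.fsum((x - m) ** 2 for x in xs) / (len(xs) - 1)

def z_test_p(a, b):
    """Two-sided p-value for a difference in means (large-sample normal approximation)."""
    se = math.sqrt(sample_var(a) / len(a) + sample_var(b) / len(b))
    z = (mean(a) - mean(b)) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def trim_sd(xs, k):
    """Hypothetical exclusion rule: drop values more than k SDs from the sample mean."""
    m, s = mean(xs), math.sqrt(sample_var(xs))
    return [x for x in xs if abs(x - m) <= k * s]

def log_all(xs):
    return [math.log(x) for x in xs]

# Each hypothetical specification bundles different auxiliary assumptions
# about outliers and functional form.
SPECS = {
    "raw": lambda xs: xs,
    "log": log_all,
    "trim_3sd": lambda xs: trim_sd(xs, 3.0),
    "trim_2sd": lambda xs: trim_sd(xs, 2.0),
    "log_trim_2sd": lambda xs: trim_sd(log_all(xs), 2.0),
}

def simulate(n_studies=2000, n_per_group=50, alpha=0.05, seed=1):
    """Return (rate for the pre-chosen 'raw' spec, rate for best-of-all specs)."""
    rng = random.Random(seed)
    planned_hits = 0  # one specification committed to in advance
    hacked_hits = 0   # keep whichever specification "works"
    for _ in range(n_studies):
        # Null is true: both groups share one lognormal distribution (median ~545 ms).
        a = [rng.lognormvariate(6.3, 0.4) for _ in range(n_per_group)]
        b = [rng.lognormvariate(6.3, 0.4) for _ in range(n_per_group)]
        p = {name: z_test_p(f(a), f(b)) for name, f in SPECS.items()}
        planned_hits += p["raw"] < alpha
        hacked_hits += min(p.values()) < alpha
    return planned_hits / n_studies, hacked_hits / n_studies

planned_rate, hacked_rate = simulate()
print(f"planned spec: {planned_rate:.3f}; best of {len(SPECS)}: {hacked_rate:.3f}")
```

Because the smallest of five p-values can never exceed the planned one, the second rate can never fall below the first. These particular specifications are highly correlated, so the inflation they produce is modest; more numerous or more loosely related choices generally push the false-positive rate higher.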

On the flip side, this is why preregistration can be a deeply engaging and intellectually rewarding process.*** Without the data whispering in your ear, “Try it this way, and if you get an asterisk we can go home,” you have one fewer shortcut around thinking about your analysis. You can, of course, leave the thinking until later. You can do so with full awareness and transparency: “This is an exploratory study, and we plan to analyze the data interactively after it is collected.” Or you can fool yourself, and maybe others, by writing a vague or partial preregistration. But if you commit to planning your whole data analysis workflow in advance, you will have nothing but thinking and theorizing to guide you through it, which, sooner or later, is what you’re going to have to be doing.

\* Or, you can write it anyway and not make sense, which also has a parallel in data analysis.

** Or outsourcing them to the software developer who decided what defaults to put in place.

*** I initially dragged my heels on starting to preregister – I know, I know – but when I finally started doing it with my lab, we experienced this for ourselves, somewhat to my own surprise.